Generative AI & the College Classroom

This guide was prepared by Joscelyn Jurich (CEP Graduate Assistant) and Melissa Wright, with important editorial and technical feedback from Elana Altman (ATLIS), Ahmed Ibrahim (CEP), and Tristan Shippen (ATLIS).

Educators are acting quickly to consider the implications of ChatGPT and other generative artificial intelligence (AI) tools. The following recommendations reflect the CEP’s and ATLIS' ongoing research into generative AI and its implications for the higher education classroom. They cover classroom activities, assignment design, academic honesty, and ethical considerations regarding the potential risks of this technology. This faculty guide accompanies the CEP's Student Guide to Generative AI.

It is important to note that this technology is evolving rapidly, and our collective understanding of what it does and how it works continues to grow as it develops. As such, the CEP has consulted with ATLIS and the Computational Science Center (CSC) and continues to conduct additional research to update these recommendations.

Please contact us at pedagogy@barnard.edu if you have any feedback or questions about this resource. We are also happy to set up one-on-one consultations with instructors to discuss generative AI in their course and disciplinary contexts.

Recommendations for Navigating Generative AI

If you’re considering using generative AI or ChatGPT in the classroom, or want to discuss these technologies with your students, it is helpful to know a little about what these tools are and how they work. Generative AI is a type of artificial intelligence that produces new content by learning patterns from existing data. This learning process, called “training,” builds a statistical model that captures how the data is structured. When given a prompt, the model predicts the most likely next words, shapes, sounds, or visuals, creating new text, images, video, or audio based on what it has learned.
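To make the idea of “predicting the most likely next word” concrete, the Python sketch below builds a toy model that simply counts which word follows which in a tiny training text, then repeatedly picks the most frequent follower. This is a deliberately simplified illustration, not how ChatGPT or Gemini are implemented: real large language models use neural networks trained on vastly more data, work with word fragments (tokens) rather than whole words, and typically sample from a range of likely continuations instead of always choosing the single most frequent one. The function names and the sample sentence are hypothetical.

```python
from collections import Counter, defaultdict

def train_bigram_model(text):
    """'Training': count, for each word, which words follow it in the text."""
    words = text.lower().split()
    model = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        model[current][following] += 1
    return model

def predict_next(model, word):
    """Return the most frequent follower of `word`, or None if never seen."""
    followers = model.get(word.lower())
    if not followers:
        return None
    # Greedy choice: take the single most frequent follower
    # (ties go to the follower seen first in the training text).
    return followers.most_common(1)[0][0]

def generate(model, start, length=5):
    """Extend a one-word prompt by repeatedly predicting the next word."""
    output = [start.lower()]
    for _ in range(length):
        nxt = predict_next(model, output[-1])
        if nxt is None:
            break
        output.append(nxt)
    return " ".join(output)

corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram_model(corpus)
print(predict_next(model, "the"))         # → cat
print(generate(model, "the", length=4))   # → the cat sat on the
```

Even this toy version shows why output can sound fluent without being grounded in fact: the model only reproduces patterns in its training text, so any gaps or biases in that text carry directly into what it generates.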

There are many generative AI products available, including OpenAI’s ChatGPT and Google Gemini (which Barnard users have access to as of August 2025). Using pre-trained large language models, tools like ChatGPT and Gemini take text prompts from users and produce responses designed to mimic human writing in a conversational format. Notable features include their ability to answer questions, hold a conversation, summarize information, and write computer code, among many other functions.

The language models behind Gemini and ChatGPT are trained on very large datasets of text, including websites, books, and other texts; these datasets are estimated to contain text on the order of trillions of words in multiple languages. The training text can be labeled data, which includes annotations set by humans that guide the language model in categorizing data correctly, or unlabeled data (sometimes called “raw data”), which does not contain any annotations. OpenAI further refined the model by having human reviewers evaluate and rank its responses, a process known as reinforcement learning from human feedback (RLHF), enhancing its ability to produce written language.

Generative AI tools have a variety of limitations that are important to understand when working with them, including:

- Responses may contain factually incorrect information.

- Output may reproduce biases that were present in the training data.

- Large language models (LLMs) may not grasp the context of the prompt, and are known to struggle with “common sense” knowledge, idioms, and sarcasm.

- Some GenAI tools can search the internet for information to reference in their answers, while others cannot.

The following resources on generative AI in the college context may be helpful for learning about the technology and its implications for academic integrity, assignment design, student engagement, and the future of AI:

- "Discipline-specific Generative AI Teaching and Learning Resources" developed by the University of Delaware Center for Teaching & Assessment of Learning.

- "Teach with Generative AI" from Harvard University offers many useful resources, including the "Harvard GenAI Library for Teaching and Learning" and the System Prompt Library, which provides a range of effective prompts that educators can use.

The following suggestions could be helpful in experimenting with generative AI tools in teaching and learning. You can use them as you see relevant and appropriate in your classes.

- Consider how generative AI may be relevant and valuable for your course, your students, and your course’s learning outcomes. Doing so could lead to creative and critical experimentation with a range of pedagogical approaches.

- Spend some time reflecting on your own thoughts about, and possible experience with, generative AI, whether in an educational context or elsewhere. This is an important first step as you consider experimenting with AI in your course, or even just including generative AI as a topic around which to build course readings and discussions.

- Ask yourself the following questions:

  - What prior experience with generative AI do you have?

  - What have your prior experiences with generative AI taught you, particularly in relationship to education and pedagogy?

  - What questions do you have as you consider whether or not to integrate it into your courses?

  - What additional information do you feel would be helpful?

- Discuss the above questions with your students to understand if and how they have been using generative AI products and how they feel about integrating generative AI into course themes and practices. As some students may already be familiar with generative AI, making them active shapers of how it is used and discussed in your course could be an important first step.

- Explore resources such as Stanford’s selection of curated curricula on topics ranging from algorithms in everyday life, to using and critiquing sentiment analysis, to using generative AI models creatively to generate text- or image-based ideas for artwork. These can be a good place to start gathering ideas and brainstorming ways in which specific resources and curricula may align with your learning goals.

- Spend some time getting acquainted and experimenting with AI platforms relevant to your discipline. As noted in Cornell’s guide to generative AI, some faculty may explore its potential for creating sample problems, writing prompts, and quiz questions, and for assisting in research tasks such as analyzing large data sets. Mollick and Mollick’s “Assigning AI: Seven Approaches for Students, with Prompts” (2023) offers several detailed prompts for different purposes (AI as mentor, tutor, coach, teammate), including example conversations with ChatGPT-4, and insight into the risks of each use. Are there possibilities for creative pedagogies and new approaches to student learning that generative AI might bring to any of your past courses and assignments? It could be helpful to review past assignments to consider to what extent, if any, revising them to incorporate generative AI would contribute to your current course’s learning objectives.

- Prioritize intentional course and assignment design aligned with your learning objectives as you consider if and how generative AI will be integrated. Focusing on what you want students to learn through your assignments by the end of the course, and on the skills and forms of expression through which they will demonstrate that learning, will help you decide to what extent, if any, generative AI should be included. Explore how Barnard faculty are reimagining their course policies, assignments, and activities to refocus on student learning and transparently communicate expectations to their students about the use of generative AI in this special feature.

- Incorporate student-led critical conversations about generative AI into your course as a structured course discussion, individual or group presentation, or debate. As discussed in this comprehensive resource on Teaching and Learning with Generative AI from Stanford, instructors and students can frame these conversations around a range of topics, including intellectual property, human rights, and labor displacement in the context of how AI products are conceived of, developed, and employed; anthropomorphism and affective responses to AI products; bias, misinformation, and stereotyping as potential outputs of generative AI; and new imaginaries of how generative AI and machine learning can be conceived of and trained, and how specific academic disciplines could contribute to realizing them. As this faculty guide to teaching with generative AI from Dartmouth suggests, media, visual, and information literacy are priorities within curricula across many fields, raising important questions about technology’s ethical, economic, and social considerations and repercussions. Encouraging discussion of generative AI’s role in media, visual, and information literacy could be particularly vital, as research on generative AI consistently stresses its potential to rapidly spread misinformation and to harm vulnerable populations.

- Consider incorporating generative AI within brainstorming or drafting processes if you are teaching a writing-centered course. As this article discusses, some instructors have found that ChatGPT can help reduce the anxiety of planning a first draft and thus leave more space for critical and creative thinking. “How ChatGPT Could Help or Hurt Students With Disabilities” argues that one of ChatGPT’s possible benefits for students with disabilities is that students who find it hard to organize their thoughts may find it helpful to use the product to generate an opening paragraph for an essay, as a way to overcome what may seem like the overwhelming task of filling a blank page. Another exercise to inspire critical thinking about argument formation in writing is to have students use ChatGPT to generate an argument for a paper and then critique, annotate, and rewrite it. Writing instructors may find the resource AI Text Generators and Teaching Writing: Starting Points for Inquiry particularly helpful as they consider if and how they want to experiment with generative AI.

- Consult these discipline-specific Generative AI teaching and learning resources as well as this report from Cornell on Generative AI for Education and Pedagogy, which outlines its possible uses in writing, performing arts, social science, STEM, and computer science courses. The question of how generative AI may transform academic disciplines raises further questions, such as those posed in this recent article about social science research, that faculty from all disciplines may find helpful to consider and discuss in the classroom: How might research practices be adapted to benefit from generative AI, and how can research remain transparent while doing so?

- Consider how to critically and thoughtfully center your own agency as an instructor, and the agency of your students, as you engage with these products. Timnit Gebru, former co-lead of Google’s Ethical AI team, has discussed generative AI in the context of its seemingly unlimited potential and warns that it does not always center human agency. “It feels like a gold rush,” she says, “and a lot of the people who are making money are not the people actually in the midst of it. But it’s humans who decide whether all this should be done or not. We should remember that we have the agency to do that.”

- Have an open conversation with your students about what ChatGPT is, and give some examples of how generative AI is being used in education generally. Consider what instructors and students need and want to know about how ChatGPT and related models are built, how they work, and their potential perils and possibilities (educational, ethical, environmental, labor, social, political).

- If you choose to incorporate generative AI in your course, consider foregrounding your permission to use generative AI in a conversation about citational practice and the ethics of transparency in research methods. Given that these products are new and conventions are still forming, you could use this opportunity to think collaboratively, critically, and creatively with your students about what scholarship and research will mean in the context of generative AI.

- Depending on the learning objectives for your course, you might consider discussing a report such as ChatGPT: Educational friend or foe? about the possibilities of and debates around ChatGPT. Recent articles or podcasts (e.g., Suspicion, Cheating, and Bans: A.I. Hits America's Schools; These Women Tried to Warn Us about AI) could also help spark a class discussion about ChatGPT in the higher education context. Such a conversation could then lead into a more focused discussion about academic production and academic honesty in your course.

- Discuss students’ potentially diverse motivations for using ChatGPT or other generative AI software. Do they arise from stress about the writing and research process? Time management on big projects? Competition with other students? Experimentation and curiosity about using AI? Grades, other pressures, or burnout? Invite your students to have an honest discussion about these and related questions. As a recent article about ChatGPT-generated abstracts fooling scientists argues, solutions to the use of ChatGPT will likely be found by critically examining the pressures motivating students and scholars to use it, rather than by focusing solely on preventing and detecting AI-generated work.

- Cultivate an environment in your course in which students will feel comfortable approaching you if they need more direct support from you, their peers, or a campus resource to successfully complete an assignment. To take steps toward cultivating this environment, you might include a question about generative AI on your introductory course survey to understand your students’ interests in and concerns about the tool. You could also include a discussion of generative AI in your community agreements, including the impact of AI-generated work on the classroom community. Additionally, take the opportunity throughout the semester to invite students to meet with you during office/student hours to discuss concerns about deadlines, especially during midterms and finals.

- Discuss the definition (or definitions) of academic honesty and your own expectations for academic honesty with your students. Be open, specific, and direct about what those expectations are.

- Students generally express interest in joining the conversation about the 'why' and 'how' of learning. Understanding this purpose and process benefits all students, can be motivating, and demonstrates respect for their agency in the learning process. Consider connecting your expectations for academic honesty to the purpose of your course learning goals or assignments. Why is academic honesty important for this kind of skill building or knowledge acquisition, and how will your class prepare students to develop these skills through the difficult work of learning? You might frame this conversation in terms of the skills you hope students will develop and the importance or value of these skills for future learning in the field or discipline, or for their lifelong learning in general.

- Review the College Honor Code with the students and explain any of your concerns about ChatGPT and other related GPT models in that context.

- Consider adding a statement to your syllabus such as, “Use of an AI text generator when an assignment does not explicitly ask or allow for it is plagiarism.”

- Clarify the extent of generative AI use in your course. Instructors across the College have different expectations and perceptions surrounding generative AI, so it may be useful to explain to your students that your policy on generative AI applies only to your class. You may also wish to state that the Honor Code stipulates that it is the student’s responsibility to ask their instructors whenever they are unsure whether, or in what sense, use of generative AI constitutes academic dishonesty.

- If you use peer review or evaluation in your course, consider a specific conversation about the implications of ChatGPT within this process. Beyond the fact that submitting AI-generated work can constitute academic dishonesty, what are the ethical implications of asking peers to review AI-generated work? If you do not use peer review or evaluation in your course, consider ways to engage students in reviewing each other’s work and to invite them to actively contribute to their community of learning and to the discipline or profession at large.

- Prioritize reflection and growth in the learning process. This might take the form of supplemental process reflections, a writer’s memo to accompany a final paper or project, or a response to a metacognitive prompt after any assessment or activity. For exams or quizzes, consider offering partial credit if students show their work or supplemental reflection questions where students can explain how and where they got stuck on a question or problem and why. When possible, give students opportunities to revise and learn from errors. In general, focusing on process over product will deepen students’ intrinsic motivation and can deter them from academic dishonesty.

- Consider reviewing your course assignments and your criteria for evaluating them to ensure that progress and process are centered.

- The CEP’s Active Learning Guide, Creating an Engaging and Inclusive Classroom, and Flipped Classrooms resources may offer helpful perspectives. Several active and flipped learning strategies, including in-class writing, collaborative coding, or purposeful group work (e.g., having students work in small groups in class to apply what they've learned from readings, viewings, or problem sets at home), can help prioritize students’ creative and collaborative learning. In-class writing, brainstorming, or problem solving (e.g., debugging) also lets students begin work in class that they then complete on their own, which may minimize the likelihood of their using or relying significantly on generative AI.

- Share our resource on Tackling Large Assignments with students to provide guidance around how to manage time and successfully plan for final course assignments.

- While the CEP does not recommend re-designing a course or assignment solely to prevent generative AI submissions, some (though not all) of the suggestions and assignment changes currently being proposed align with existing evidence-based practices for improving both learning and engagement and promoting higher-order thinking. If appropriate to your course, consider reviewing your existing assignments to emphasize process, reflection, and academic honesty.

- Though handwritten assignments may seem like a tempting alternative to typed papers or exam submissions, keep accessibility in mind; handwritten work, especially on timed exams, can be difficult for students with disabilities and students who use screen readers. For a recent consideration of the issue of timed exams in light of AI, see "AI text generation: Should we get students back in exam halls?"

- Though ChatGPT can be used to quickly summarize information, it is prone to inaccuracies, bias, and hallucinations (confidently stated but fabricated information). Generative AI tools are also currently less well equipped to perform in-depth comparative analysis and synthesis, or to complete assignments that draw on verifiable sources and quotations. Consider scaffolding an assignment by incorporating summary in low-stakes assignments or in-class activities (e.g., classroom discussions, compiled questions about a reading on Padlet or CourseWorks, concept maps), and then encouraging students to engage in higher-order thinking in written assignments, exams, or projects (e.g., synthesis of multiple texts, application of understanding to their personal lives or experiences, presentations, papers, or projects that build on prior research).

- If given enough personal information about a student, ChatGPT can simulate reflective writing or writing that appears to draw upon personal experience. Asking students to relate details from a reading, lecture, experiment, or class discussion to their personal lives can engage students in the material and help them see its relevance, but bear in mind that such writing can still be simulated.

- Older versions of ChatGPT (such as those based on GPT-3) could not connect to the internet in real time, and language models are trained on data collected up to a fixed cutoff date, though some current tools can now search the web. Instructors may consider this when adapting their course, for example by incorporating more recent or less canonical texts within the syllabus or by designing assignments in different mediums or modes (e.g., concept map, collage, oral presentation, video, photo essay).

- When possible, scaffold your assignments to promote revision and growth over time, with opportunities for feedback from peers, TAs, and/or the instructor. Build assignment pre-writing or brainstorming into class time and invite students to share and discuss these ideas in small groups or with the class as a whole.

- If you and your students are comfortable engaging with generative AI (see privacy considerations below), consider experimenting with the tool in a low-stakes assignment (e.g., asking them to craft an essay, quiz, or code using the tool and then reflect on why and how the essay, quiz, or code could be changed to be more fully developed, factually accurate, or to run more efficiently or elegantly). These activities can also promote metacognition. Not sure if your students are comfortable using generative AI technology? Ask them about it on your initial course survey.

- Prior to introducing an assignment that may incorporate the use of generative AI, explore whether all students can afford to use these products, or if some students will be unfairly advantaged by access to paid versions. Be explicit with students regarding the products that are allowed within the class, and in what specific ways, to maintain an equitable learning environment. Bear in mind that not all students will be familiar with how to use this technology, and assignments incorporating generative AI may require additional support and teaching. Please also note that the College is exploring institutional licenses that would mitigate these equity concerns.

- If you choose to incorporate generative AI into your course assignments, state explicitly how you would like students to cite their use of generative AI within their assignments or assessments, or ask them to cite these products according to the citation system common in your discipline. Both the MLA and the APA have published explicit guidance on citing generative AI. It is important to articulate your requested citation format or style in writing so students understand what is expected of them.

- Detection software is not necessarily a reliable means of checking for use of generative AI. For example, Turnitin, the commonly used program incorporated into CourseWorks, has an AI detection tool; some faculty may have noticed an AI detection report included within their Similarity Report last semester. However, there are serious and acknowledged concerns about its bias and reliability, including a higher risk of false positives than originally reported by the company. Another limitation is that, unlike the Similarity Report, which students can also see, the AI detection report is visible only to instructors. For these reasons, and in keeping with the approach of the majority of our peer institutions, the Barnard Provost's Office, in consultation with ATLIS, DEI, and the CEP, has disabled Turnitin's AI detection report.

- The CEP and ATLIS recommend caution as faculty consider experimenting with other AI detection software, given the limitations of this technology, in particular its bias against non-native English speakers. As with any tool for detecting academic dishonesty, an AI detection report should function as only one piece of evidence within a broader analysis of student work.

- ChatGPT may share account holders’ personal information with third parties, as stated in OpenAI’s privacy policy, and numerous articles have exposed how it replicates racist biases (see, for example, “ChatGPT proves that AI still has a racism problem” and “The Internet’s New Favorite AI Proposes Torturing Iranians and Surveilling Mosques”). Barnard's Computer Science department also hosted a panel in May 2021 featuring AI experts and activists titled "Bias in AI: Why it’s a problem and what should be done about it?"

- It is important to be aware that ChatGPT’s potential sharing of personal information with third parties may raise serious privacy concerns for your students and perhaps in particular for students from marginalized backgrounds. For those interested in using ChatGPT and concerned about privacy, see OpenAI's latest settings for sharing chat history.

- The potential replication and reinforcement of racial, gender, and other biases, along with generative AI’s privacy and misinformation concerns, make it imperative that instructors approach possible experimentation with generative AI products deliberately and with great care. As this guide to Generative AI Systems in Education – Uses and Misuses emphasizes, critical research, self-reflection, and consideration of one’s course goals and expectations are necessary before including generative AI in the classroom, to ensure that all students, and particularly those from marginalized backgrounds, are supported.

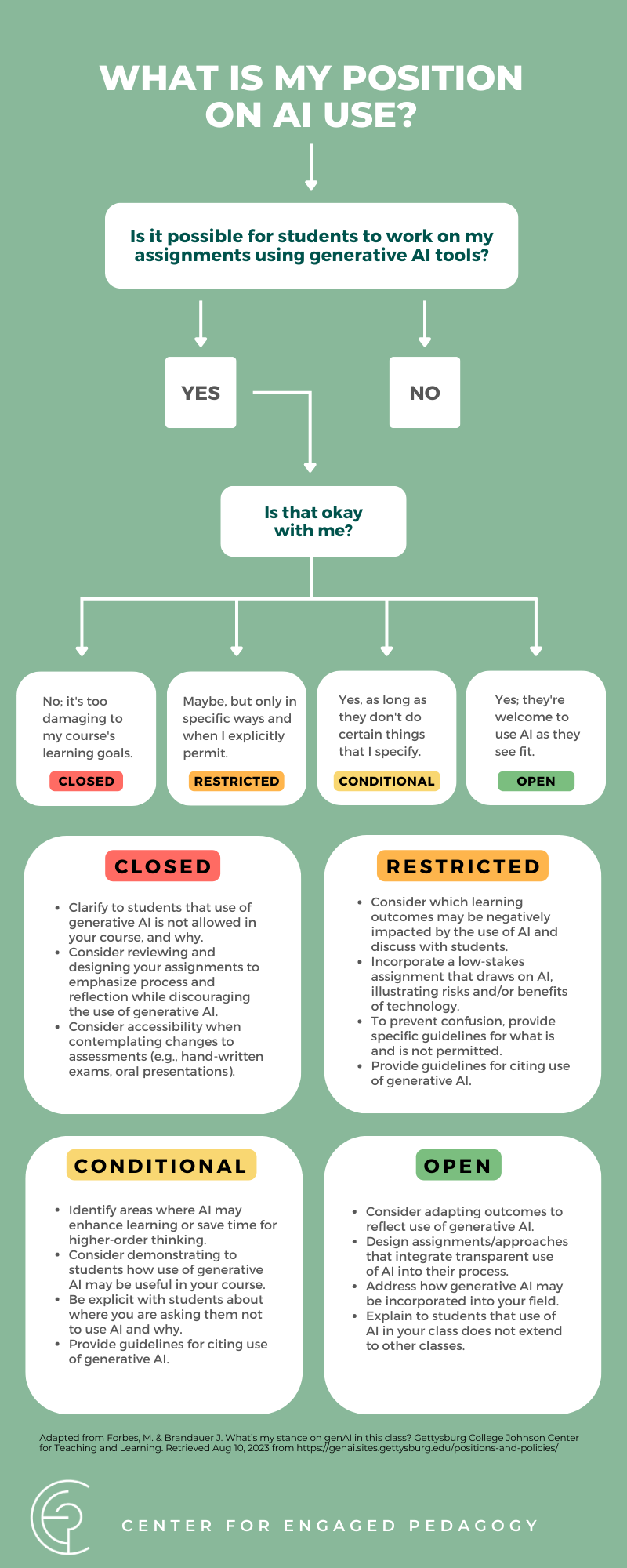

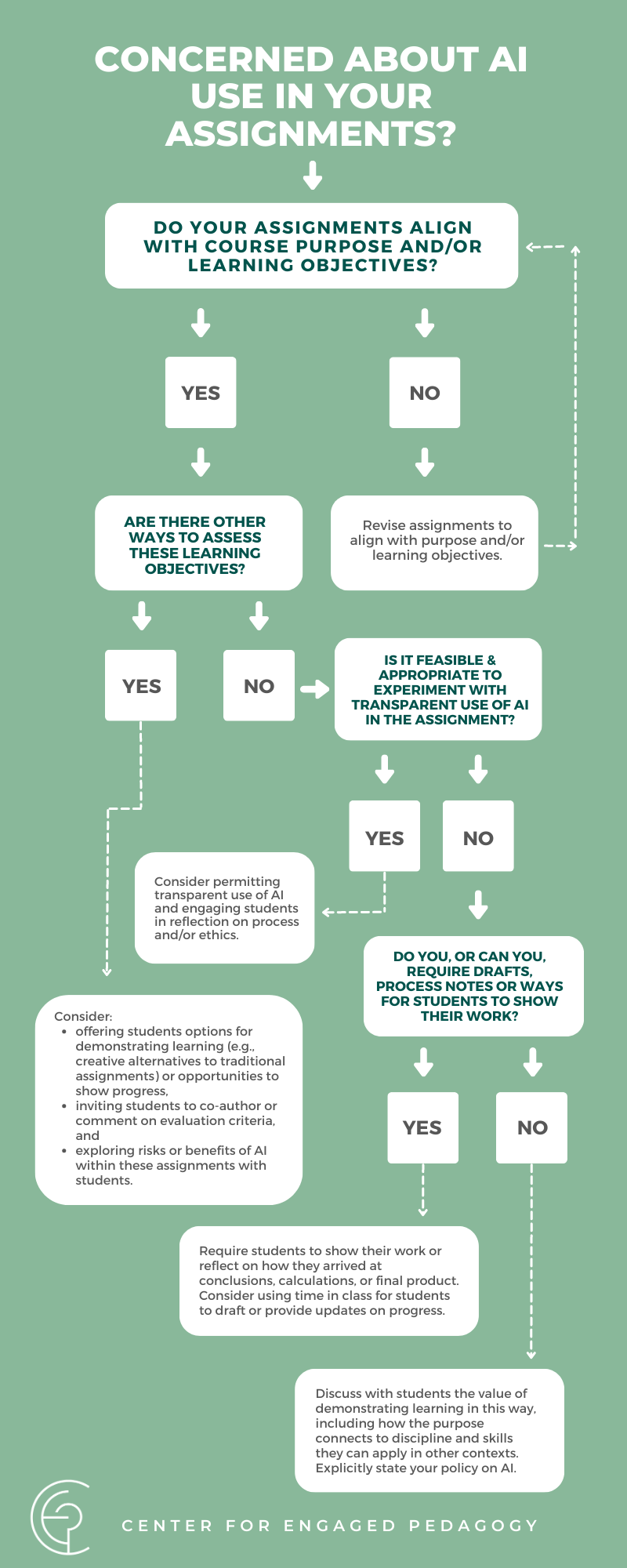

Making Decisions About Generative AI

These two resources can be used to guide your decision on whether or not to incorporate generative AI in your classroom. A larger version of the infographics is also available, along with a PDF version and a simple-text PDF.

Example Syllabi Statements

This section includes examples of syllabus statements on generative AI. There are a variety of ways in which faculty might respond to this new and continually developing technology, and instructors can use syllabi statements to define expectations for generative AI in their courses. Course policies across higher education reflect a spectrum of positions regarding students’ use of AI tools. Some instructors allow students to engage with AI in some specified way, and others forbid the use of AI products altogether. What these statements share is an acknowledgement of AI as a reality within our teaching and learning contexts and an explicit explanation of the instructor’s approach or policy. While a syllabus statement is only one way of addressing your approach to AI, the process of drafting your policy, and explicitly connecting that policy to the purpose, skills, and big ideas of your course, may also help you reflect on elements of your course design and clarify your pedagogical goals. In addition to the statements below, instructors might benefit from exploring the Classroom Policies for Generative AI Tools resource, which includes several examples of digital transparency statements across the disciplines and from various institutions.

For instructors who wish to forbid generative AI in their classes:

“Our academic community depends on integrity, shared responsibility, and academic honesty. All work in this course must be your original work and completed in accordance with the College’s Honor Code. You may not use ChatGPT or other generative AI software at any stage or in any phase in any type of work in this course, even when properly attributed. [Insert discipline- or context-specific reasons for this, including skill building, concerns regarding equity, etc.] If you have questions about what is permissible at any point in the semester, please reach out to me.”

For instructors who are open to students using generative AI in some way:

“We may incorporate ChatGPT and other generative AI software during this course [here the specific uses could be noted depending on the course and discipline]. Students will be informed about when, where, and how such tools are permitted to be used for class work and assignments, along with specific instructions for attribution. Outside of these approved uses, ChatGPT and other generative AI software are not permitted unless specifically approved by the instructor. [If you would like them to cite these tools: Any and all use of ChatGPT and other AI software at any stage of completing assignments for this course must be properly cited in your work using [insert style guide or approach to citation]; neglecting to do so may constitute a violation of the College’s Honor Code.] If you have questions about what is permissible at any point in the semester, please reach out to me. Please also note that this policy applies only to my class, and it is your responsibility to check with each instructor if ever you are unsure about what constitutes academic honesty in their class.”

A Few Words about Generative AI (e.g., ChatGPT)

Writing is integral to thinking. It is also hard. Natural language processing (NLP) applications like ChatGPT or Sudowrite are useful tools for helping us improve our writing and stimulate our thinking. However, they should never serve as a substitute for either. And, in this course, they cannot.

Think of the help you get from NLP apps as a much less sophisticated version of the assistance you can receive (for free!) from a Bentley Writing Center tutor. That person might legitimately ask you a question to jump-start your imagination, steer you away from the passive voice, or identify a poorly organized paragraph, but should never do the writing for you. A major difference here, of course, is that an NLP app is not a person. It’s a machine which is adept at recognizing patterns and reflecting those patterns back at us. It cannot think for itself. And it cannot think for you.

With that analogy in mind, you will need to adhere to the following guidelines in our class.

Appropriate use of AI when writing essays or discussion board entries

- You are free to use spell check, grammar check, and synonym identification tools (e.g., Grammarly and MS Word)

- You are free to use app recommendations when it comes to rephrasing sentences or reorganizing paragraphs you have drafted yourself

- You are free to use app recommendations when it comes to tweaking outlines you have drafted yourself

Inappropriate use of AI when writing essays or discussion board entries

- You may not use entire sentences or paragraphs suggested by an app without providing quotation marks and a citation, just as you would for any other source. Citations should take this form: OpenAI, ChatGPT. Response to prompt: “Explain what is meant by the term ‘Triple Bottom Line’” (February 15, 2023, https://chat.openai.com/).

- You may not have an app write a draft (either rough or final) of an assignment for you

Evidence of inappropriate AI use will be grounds for submission of an Academic Integrity report. Sanctions will range from a zero for the assignment to an F for the course.

I’m assuming we won’t have a problem in this regard but want to make sure that the expectations are clear so that we can spend the semester learning things together—and not worrying about the origins of your work.

Be aware that other classes may have different policies and that some may forbid AI use altogether.

Dongwook Yoon: CPSC 344

344 Policy on the Use of AI Content Generators for the Coursework (ver 1.1, Jan 18, 2023)

Dear CPSC 344 students,

I am writing to inform you that the use of AI-based content generation tools, or AI tools, is permitted for assignments and project work in CPSC 344. However, it is not allowed during midterm exams and the final. Additionally, students are required to disclose any use of AI tools for each assignment. Failure to follow this policy will be considered a violation of UBC’s academic policy.

I view AI tools as a powerful resource that you can learn to embrace.

The goal is to develop your resilience to automation, as these tools will become increasingly prevalent in the future. By incorporating these tools into your work process, you will be able to focus on skills that will remain relevant despite the rise of automation. Furthermore, I believe that these tools can be beneficial for ESL students and those who have been disadvantaged, allowing them to express their ideas in a more articulate and efficient manner.

We are aware that there are risks involved in allowing the use of AI tools in your assignment deliverables.

Therefore, we ask that you read this carefully and use the tools responsibly.

Firstly, it is important to note that AI tools are susceptible to errors and may incorporate discriminatory ideas in their output. As a student, it is your responsibility to ensure the quality and appropriateness of the work you submit in this course.

Secondly, please be mindful of the data you provide to these systems, as your assignments contain private information, not just your own but also that of others. For example, you should never enter the names of your study participants into ChatGPT.

Thirdly, there is a risk of inadvertently plagiarizing when using these tools. Many AI chatbots and image generators create content based on existing bodies of work without proper citation. Our plagiarism policy will apply to all assignment submissions, and “AI did it!” will not excuse any plagiarism. To prevent this, you can consider using more responsible tools that cite their data sources, such as Perplexity AI.

Lastly, be aware of the dangers of becoming overly dependent on these tools. While they can be incredibly useful, relying on them too much can diminish your own critical thinking and writing skills.

For every assignment submission, you are required to complete a form called the “AI use disclosure.”

Submitting this disclosure will help us understand and mitigate the risks associated with the use of AI tools in the course. The form will ask about your use of AI tools for the assignment and the extent to which you used them. You will be asked to submit the disclosure via Canvas.

If you do not wish to use these tools, that is a valid decision.

The use of AI tools in education can be messy and unpredictable due to the risks mentioned earlier. Some students may have moral confusion or concerns about the uncertainty associated with using AI tools in their coursework. If you do not wish to use them, that is a valid decision. This policy aims to anticipate and mitigate any potential harms associated with AI tool usage, rather than promoting their use.

We will not mark you down for the use or non-use of AI tools.

Grading will be done based on the rubric on an absolute scale, so students who do not use AI tools will not be at a disadvantage. Also, the use of AI tools will not negatively impact your grade. The instructor will be the only one with access to the submitted AI use disclosures, and TAs will handle the majority of grading.

Please note that the policy about the use of AI tools in CPSC 344 is up for change as the term progresses.

Best,

Dongwook Yoon

Instructor of CPSC 344, Spring 2023

Computer Science

UBC, Vancouver

Additional Resources & References

Brookfield, S. (2012). Teaching for Critical Thinking: Tools and Techniques to Help Students Question Their Assumptions. Jossey-Bass.

Classroom Policies for Generative AI Tools [An open-source repository of sample digital transparency language and policies from higher education]

Columbia University Center for Teaching & Learning. (n.d.). Considerations for AI Tools in the Classroom. https://ctl.columbia.edu/resources-and-technology/resources/ai-tools/

Columbia University Center for Teaching & Learning. (n.d.). Metacognition. https://ctl.columbia.edu/resources-and-technology/resources/metacognition/

Cornell University. (2023). CU Committee Report: Generative Artificial Intelligence for Education and Pedagogy. https://teaching.cornell.edu/generative-artificial-intelligence/cu-committee-report-generative-artificial-intelligence-education

Cornell University Center for Teaching Innovation. (2023). Generative Artificial Intelligence. https://teaching.cornell.edu/generative-artificial-intelligence

Electronic Privacy Information Center. (2023). Generating Harms: Generative AI’s Impact & Paths Forward. https://epic.org/wp-content/uploads/2023/05/EPIC-Generative-AI-White-Paper-May2023.pdf

Fisher, A. (2011). Critical Thinking: An Introduction. Cambridge University Press.

Grossmann, I., Feinberg, M., Parker, D. C., Christakis, N. A., Tetlock, P. E., & Cunningham, W. A. (2023). "AI and the transformation of social science research: Careful bias management and data fidelity are key." Science, 380, 1108-1109. https://www.science.org/doi/10.1126/science.adi1778

Harris, J. (2023, May 22). "‘There was all sorts of toxic behaviour’: Timnit Gebru on her sacking by Google, AI’s dangers and big tech’s biases." The Guardian. https://www.theguardian.com/lifeandstyle/2023/may/22/there-was-all-sorts-of-toxic-behaviour-timnit-gebru-on-her-sacking-by-google-ais-dangers-and-big-techs-biases

Heaven, W. (2023, April 6). "ChatGPT is going to change education, not destroy it." MIT Technology Review. https://www.technologyreview.com/2023/04/06/1071059/chatgpt-change-not-destroy-education-openai/

Hogan, K., & Pressley, M. (Eds.). (1997). Scaffolding Student Learning: Instructional Approaches and Issues. Brookline Books.

Hughes, A. (2023, February 2). ChatGPT: Everything you need to know about OpenAI's GPT-3 tool. BBC Science Focus Magazine. https://www.sciencefocus.com/future-technology/gpt-3/

McMurtrie, B. (2023, May 26). "How ChatGPT Could Help or Hurt Students With Disabilities." Chronicle of Higher Education. https://www.chronicle.com/article/how-chatgpt-could-help-or-hurt-students-with-disabilities

Mills, A., & Goodlad, L. M. E. (2023, January 17). Adapting College Writing for the Age of Large Language Models such as ChatGPT: Some Next Steps for Educators. Critical AI. https://criticalai.org/2023/01/17/critical-ai-adapting-college-writing-for-the-age-of-large-language-models-such-as-chatgpt-some-next-steps-for-educators/

Mills, A. (Curator). (2023, February 14). "AI Text Generators and Teaching Writing: Starting Points for Inquiry." https://wac.colostate.edu/repository/collections/ai-text-generators-and-teaching-writing-starting-points-for-inquiry/

Mollick, E., & Mollick, L. (2023, June 12). Assigning AI: Seven Approaches for Students, with Prompts. https://ssrn.com/abstract=4475995

Montclair State University Office for Faculty Excellence. (2023, January 17). Practical Responses to ChatGPT. https://www.montclair.edu/faculty-excellence/practical-responses-to-chat-gpt/

O'Brien, M. (2023, January 6). Explainer: What is ChatGPT and why are schools blocking it? AP News. https://apnews.com/article/what-is-chat-gpt-ac4967a4fb41fda31c4d27f015e32660

OpenAI API. (n.d.). https://platform.openai.com/docs/chatgpt-education

OpenAI. (2023, February 2). ChatGPT: Optimizing language models for dialogue. Retrieved February 4, 2023, from https://openai.com/blog/chatgpt/

Rouse, M. (n.d.). What is ChatGPT? Definition from Techopedia. Techopedia.com. https://www.techopedia.com/definition/34933/chatgpt

Stanford University Center for Teaching and Learning. (2022). Teaching and Learning with Generative AI. https://docs.google.com/document/d/1la8jOJTWfhUdNna5AJYiKgNR2-54MBJswg0gyBcGB-c/edit#heading=h.l53ludoiszvz

Stanford Graduate School of Education. (2022, December 20). Stanford faculty weigh in on ChatGPT's shake-up in education. https://ed.stanford.edu/news/stanford-faculty-weigh-new-ai-chatbot-s-shake-learning-and-teaching

Stanford University Graduate School of Education. (2022). Classroom-Ready Resources About AI For Teaching. https://craft.stanford.edu/resources/

Sydorenko, I. (2020, September 1). Unlabeled data in machine learning. Label Your Data. https://labelyourdata.com/articles/unlabeled-data-in-machine-learning

UC Berkeley Center for Teaching & Learning. (n.d.). Valuing Process as Equal To, or Greater Than, Product. https://teaching.berkeley.edu/valuing-process-equal-or-greater-product

University of Calgary Taylor Institute for Teaching and Learning. (2023). Teaching and Learning with Artificial Intelligence Apps

University of Connecticut Center for Excellence in Teaching and Learning. (n.d.). Critical Thinking and other Higher-Order Thinking Skills. https://cetl.uconn.edu/resources/design-your-course/teaching-and-learning-techniques/critical-thinking-and-other-higher-order-thinking-skills/

University of Delaware Center for Teaching & Assessment of Learning. (2023). Discipline-specific Generative AI Teaching and Learning Resources. https://docs.google.com/document/d/1lAFHJO6iffMyi5ar0jqZrjf_UL5vB443CBCrms-jIgQ/edit#heading=h.70ovjca47bg9

What is Generative AI? McKinsey & Company. (2023, January 19). https://www.mckinsey.com/featured-insights/mckinsey-explainers/what-is-generative-ai